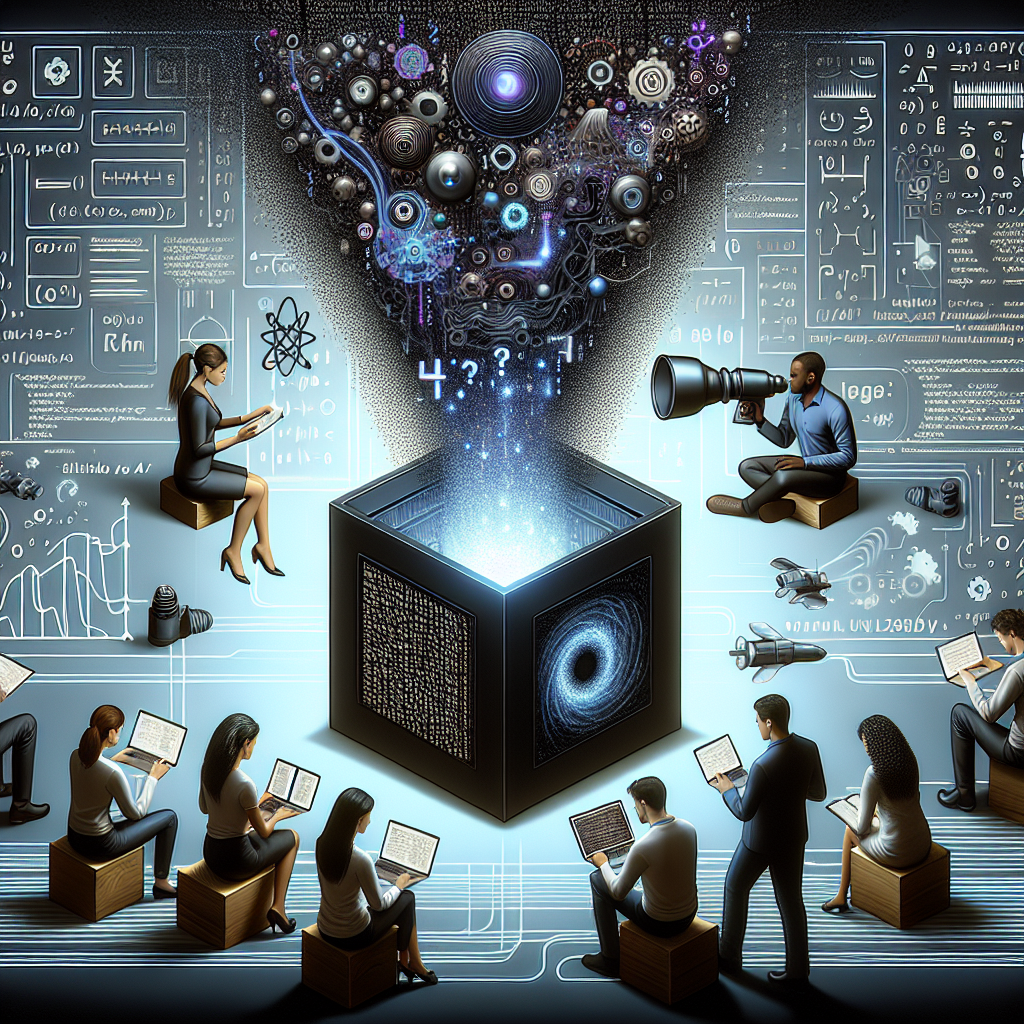

Artificial Intelligence (AI) has revolutionized sectors from healthcare to finance by providing powerful tools for data analysis and decision-making. However, as AI systems become more complex, they often operate as “black boxes,” making it difficult for users to understand how decisions are made. This lack of transparency raises concerns about accountability, trust, and ethics. This article explores the concept of Explainable AI (XAI), its importance, its challenges, and its real-world applications.

The Importance of Explainable AI

Explainable AI refers to methods and techniques that make the outputs of AI systems understandable to humans. The significance of XAI can be summarized in several key points:

- Trust and Adoption: Users are more likely to trust AI systems that provide clear explanations for their decisions.

- Accountability: In sectors like healthcare and finance, understanding AI decisions is crucial for accountability and compliance with regulations.

- Debugging and Improvement: Explainability allows developers to identify and rectify errors in AI models, leading to better performance.

- Ethical Considerations: XAI helps ensure that AI systems operate fairly and do not perpetuate biases.

Challenges in Achieving Explainability

Despite its importance, achieving explainability in AI systems presents several challenges:

- Complexity of Models: Many AI models, particularly deep learning algorithms, are inherently complex and difficult to interpret.

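- Trade-off Between Accuracy and Interpretability: The most accurate models, such as deep neural networks and large ensembles, are often harder to interpret than simpler ones like linear models or shallow decision trees, so developers must weigh predictive performance against transparency.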

- Lack of Standardization: There is no universally accepted framework for measuring and ensuring explainability, leading to inconsistencies across different systems.

- Domain-Specific Requirements: Different industries have varying needs for explainability, complicating the development of a one-size-fits-all solution.
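
One way around model complexity is to treat the model as a black box and probe it from the outside. The sketch below illustrates permutation importance, a common model-agnostic technique: shuffle one feature at a time and measure how much the prediction error grows. The `black_box_model` function, the synthetic data, and all other names are assumptions made for illustration, not part of any system discussed in this article.

```python
import random


def black_box_model(row):
    # Hypothetical opaque model: relies heavily on feature 0,
    # slightly on feature 1, and ignores feature 2 entirely.
    return 3.0 * row[0] + 0.5 * row[1]


def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_features, seed=0):
    """Return, per feature, the increase in error after shuffling that feature."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(n_features):
        # Shuffle column j across rows, leaving the other columns untouched.
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled_rows = [r[:j] + [v] + r[j + 1:] for r, v in zip(rows, column)]
        shuffled_error = mean_squared_error(model, shuffled_rows, targets)
        importances.append(shuffled_error - baseline)
    return importances


# Synthetic data (assumed): targets come straight from the model itself,
# so the baseline error is zero and any increase is due to shuffling.
data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(3)] for _ in range(200)]
targets = [black_box_model(r) for r in rows]

importances = permutation_importance(black_box_model, rows, targets, n_features=3)
for index, value in enumerate(importances):
    print(f"feature {index}: error increase {value:.4f}")
```

In this toy setup the feature the model ignores receives an importance of zero, and the feature with the largest weight receives the largest score. With a real model, the same idea would be applied to held-out data and averaged over several shuffles to get stable estimates.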

Real-World Applications of Explainable AI

Several industries are beginning to implement XAI to enhance transparency and trust in their AI systems. Here are a few notable examples:

- Healthcare: AI systems used for diagnosing diseases must provide explanations for their recommendations. For instance, IBM Watson Health has developed tools that explain treatment options based on patient data.

- Finance: In credit scoring, companies like ZestFinance use XAI to explain why a loan application was approved or denied, which supports compliance with regulations like the Equal Credit Opportunity Act, under which lenders must give applicants specific reasons for a denial.

- Autonomous Vehicles: Companies like Waymo are working on XAI to explain the decision-making processes of self-driving cars, which is crucial for safety and regulatory approval.

- Human Resources: AI-driven recruitment tools are increasingly being scrutinized for bias. Companies are implementing XAI to clarify how candidates are evaluated and selected.
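
To make the credit-scoring case above more concrete, here is a minimal sketch of how a decision could be returned together with its reasons. It assumes a hypothetical linear score over standardized features, where each feature's contribution is its weight times its value. The weights, feature names, and threshold are invented for illustration and do not describe any lender's actual method.

```python
# Hypothetical weights for standardized features (0 = average applicant).
WEIGHTS = {
    "income": 0.4,
    "credit_history_years": 0.3,
    "debt_ratio": -0.6,
    "late_payments": -0.5,
}
APPROVAL_THRESHOLD = 0.0  # assumed cut-off: approve when the score is at least 0


def explain_decision(applicant, max_reasons=2):
    """Score an applicant and list the features that lowered the score most."""
    contributions = {
        name: weight * applicant[name] for name, weight in WEIGHTS.items()
    }
    score = sum(contributions.values())
    approved = score >= APPROVAL_THRESHOLD
    # Sort negative contributions from most to least harmful.
    negatives = sorted(
        (value, name) for name, value in contributions.items() if value < 0
    )
    reasons = [name for _, name in negatives[:max_reasons]]
    return approved, score, reasons


# Example applicant (assumed values, in standard deviations from the average).
applicant = {
    "income": 0.5,
    "credit_history_years": -1.0,
    "debt_ratio": 1.5,
    "late_payments": 2.0,
}

approved, score, reasons = explain_decision(applicant)
print("approved:", approved)
print("main factors lowering the score:", reasons)
```

A linear score is used here only because its contributions are trivially additive. For a more complex model, the same kind of per-feature breakdown would have to come from an attribution technique rather than from the weights directly.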

Conclusion

As AI continues to permeate various aspects of our lives, the need for Explainable AI becomes increasingly critical. By enhancing transparency, accountability, and trust, XAI can help mitigate the risks associated with black-box models. While challenges remain in achieving effective explainability, ongoing research and real-world applications demonstrate the potential for XAI to transform industries. As we move forward, prioritizing explainability will be essential for the responsible development and deployment of AI technologies.
