
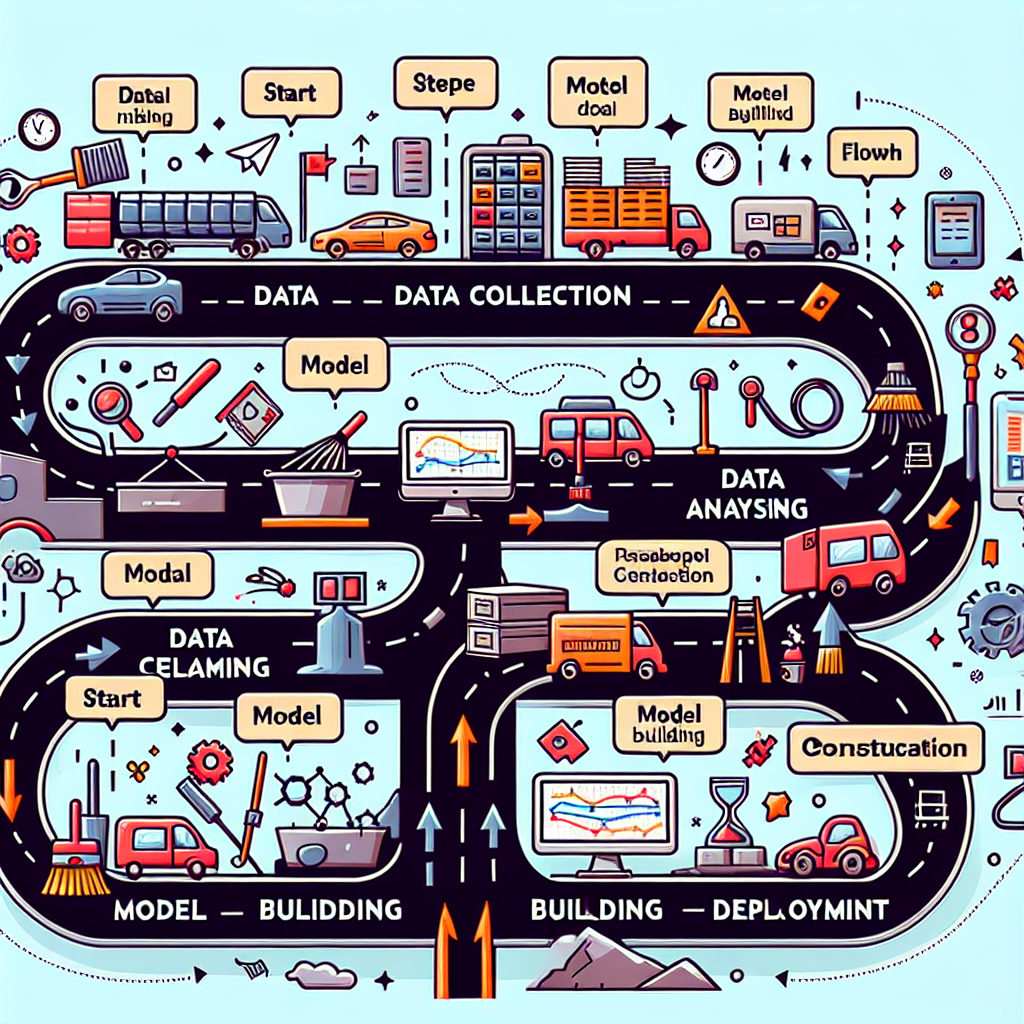

In the age of big data, the role of data science has become increasingly vital for organizations seeking to leverage data for strategic decision-making. The data science workflow is a structured process that guides data scientists from the initial stages of data collection to the final deployment of models. Understanding this workflow is essential for anyone looking to harness the power of data effectively.

Understanding the Data Science Workflow

The data science workflow can be broken down into several key stages, each critical to the success of a data science project. These stages include:

- Data Collection: Gathering relevant data from various sources.

- Data Cleaning: Preparing and cleaning the data for analysis.

- Exploratory Data Analysis (EDA): Analyzing the data to uncover patterns and insights.

- Model Building: Developing predictive models using machine learning algorithms.

- Model Evaluation: Assessing how well the model performs, typically on data it was not trained on.

- Deployment: Implementing the model in a production environment.

Data Collection: The Foundation of Data Science

The first step in the data science workflow is data collection. This stage involves gathering data from various sources, which can include:

- Databases and data warehouses

- APIs (Application Programming Interfaces)

- Web scraping

- Surveys and questionnaires

- Public datasets

For example, a retail company may collect data from its sales transactions, customer feedback, and social media interactions to gain insights into customer behavior. The quality and relevance of the data collected are crucial, as they directly impact the outcomes of subsequent stages in the workflow.
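As a minimal sketch of combining two such sources with only Python's standard library: the CSV sales export, the JSON feedback payload, and the field names (`order_id`, `customer_id`, `amount`, `rating`) are all hypothetical, and they are inlined as strings so the example runs without a database or network access.

```python
import csv
import io
import json

# Hypothetical sample data standing in for a sales-database export and an API response.
SALES_CSV = """order_id,customer_id,amount
1001,c1,25.50
1002,c2,40.00
"""

FEEDBACK_JSON = '[{"customer_id": "c1", "rating": 4}, {"customer_id": "c2", "rating": 2}]'


def load_sales(text):
    """Parse CSV text into a list of dicts, converting the amount column to float."""
    rows = list(csv.DictReader(io.StringIO(text)))
    for row in rows:
        row["amount"] = float(row["amount"])
    return rows


def load_feedback(text):
    """Parse a JSON array of feedback records."""
    return json.loads(text)


def join_on_customer(sales, feedback):
    """Attach each customer's rating (None if absent) to their sales rows."""
    ratings = {item["customer_id"]: item["rating"] for item in feedback}
    return [{**row, "rating": ratings.get(row["customer_id"])} for row in sales]


if __name__ == "__main__":
    combined = join_on_customer(load_sales(SALES_CSV), load_feedback(FEEDBACK_JSON))
    for record in combined:
        print(record)
```

Passing the text of a real export file or an HTTP response body instead of the inline strings leaves the parsing and joining logic unchanged.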

Data Cleaning: Ensuring Quality and Consistency

Once data is collected, the next step is data cleaning. This process involves identifying and correcting errors or inconsistencies in the data. Common tasks in this stage include:

- Removing duplicates

- Handling missing values

- Standardizing formats (e.g., date formats)

- Filtering out irrelevant data

For instance, if a dataset contains customer ages recorded in different formats (e.g., “25”, “25 years”, “twenty-five”), standardizing these entries is essential for accurate analysis. A clean dataset ensures that the insights derived from it are reliable and actionable.
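The age example can be sketched in Python as follows. This is an illustration under stated assumptions, not a general-purpose parser: `parse_age` and `clean_records` are hypothetical helpers, and they only understand bare numbers, numbers followed by "year(s)" or "yr(s)", and spelled-out ages from zero to ninety-nine. Rows with a missing or unparseable age are dropped, which is only one way of handling missing values (imputation is another).

```python
import re

# Number words from zero to nineteen, plus the tens: a deliberately small,
# hypothetical vocabulary covering spelled-out ages up to ninety-nine.
SMALL = {word: value for value, word in enumerate(
    "zero one two three four five six seven eight nine ten eleven twelve "
    "thirteen fourteen fifteen sixteen seventeen eighteen nineteen".split())}
TENS = {"twenty": 20, "thirty": 30, "forty": 40, "fifty": 50,
        "sixty": 60, "seventy": 70, "eighty": 80, "ninety": 90}

NUMERIC_AGE = re.compile(r"(\d+)\s*(?:years?|yrs?)?")


def parse_age(raw):
    """Convert '25', '25 years' or 'twenty-five' to 25; return None if unusable."""
    if raw is None:
        return None
    text = str(raw).strip().lower()
    match = NUMERIC_AGE.fullmatch(text)
    if match:
        return int(match.group(1))
    words = re.split(r"[\s-]+", text)
    if len(words) == 1:
        return SMALL.get(words[0], TENS.get(words[0]))
    if len(words) == 2 and words[0] in TENS and 1 <= SMALL.get(words[1], 0) <= 9:
        return TENS[words[0]] + SMALL[words[1]]
    return None


def clean_records(records):
    """Standardize ages, drop rows with no usable age, then remove exact duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        age = parse_age(record.get("age"))
        if age is None:
            continue  # missing or unparseable; imputing a value is an alternative
        row = {**record, "age": age}
        key = tuple(sorted(row.items()))  # hashable fingerprint of the standardized row
        if key in seen:
            continue  # exact duplicate once formats are standardized
        seen.add(key)
        cleaned.append(row)
    return cleaned


if __name__ == "__main__":
    raw = [{"customer": "a", "age": "25"}, {"customer": "a", "age": "25 years"},
           {"customer": "b", "age": "twenty-five"}, {"customer": "c", "age": ""}]
    print(clean_records(raw))
```

Standardizing before de-duplicating matters here: "25" and "25 years" for the same customer only become recognizable duplicates after both have been converted to the integer 25.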

Exploratory Data Analysis (EDA): Uncovering Insights

Exploratory Data Analysis (EDA) is a critical phase where data scientists analyze the cleaned data to identify patterns, trends, and relationships. This stage often involves:

- Visualizing data through graphs and charts

- Calculating summary statistics (mean, median, mode)

- Identifying correlations between variables

For example, a healthcare organization might use EDA to explore the relationship between patient demographics and treatment outcomes, leading to insights that can inform better patient care strategies.
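For the summary statistics and correlations, Python's `statistics` module covers the basics. The sketch below computes mean, median, and mode, and implements the Pearson correlation coefficient directly so the formula is visible (Python 3.10 and later also provide `statistics.correlation`). The patient ages and lengths of stay are made-up numbers used purely for illustration.

```python
import math
import statistics


def summarize(values):
    """Mean, median and mode of a sequence of numbers."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "mode": statistics.mode(values),
    }


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("xs and ys must have the same length (at least 2)")
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    # Sum of co-deviations divided by the product of root sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return cov / (spread_x * spread_y)


if __name__ == "__main__":
    # Made-up illustrative data: patient age and length of hospital stay (days).
    ages = [34, 45, 45, 52, 61, 70]
    stay_days = [2, 3, 4, 4, 6, 7]
    print(summarize(ages))
    print(f"age vs. stay correlation: {pearson(ages, stay_days):.2f}")
```

A coefficient near +1 or -1 indicates a strong linear relationship, but it says nothing about causation, so a finding like this is a prompt for further analysis rather than a conclusion.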

Model Building and Evaluation: Creating Predictive Models

After gaining insights from EDA, the next step is model building. Data scientists select appropriate machine learning algorithms to create predictive models based on the data. This stage includes:

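- Choosing an algorithm suited to the problem type (e.g., linear regression for predicting a numeric value, logistic regression or a decision tree for classification)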

- Training the model on a subset of the data (the training set), holding the rest back for evaluation

- Tuning hyperparameters (settings such as a decision tree's maximum depth) against a validation set to improve performance
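The three steps above can be sketched end to end with a k-nearest-neighbours classifier. This algorithm is a swapped-in choice for illustration, not one the workflow prescribes; the number of neighbours `k` plays the role of the hyperparameter, and the two-cluster dataset produced by the hypothetical `make_toy_data` helper is synthetic.

```python
import math
import random
from collections import Counter


def make_toy_data(n=40, seed=1):
    """Generate two hypothetical, well-separated 2-D clusters labelled 0 and 1."""
    rng = random.Random(seed)
    rows = []
    for i in range(n):
        label = i % 2
        cx, cy = (0.0, 0.0) if label == 0 else (5.0, 5.0)
        rows.append(((rng.gauss(cx, 1.0), rng.gauss(cy, 1.0)), label))
    return rows


def train_val_split(rows, val_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and split them into training and validation sets."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    cut = int(len(rows) * (1 - val_fraction))
    return rows[:cut], rows[cut:]


def knn_predict(train, point, k):
    """Predict the majority label among the k training rows nearest to `point`."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], point))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def accuracy(train, rows, k):
    """Fraction of `rows` whose label k-NN (using `train` as reference) gets right."""
    correct = sum(knn_predict(train, x, k) == y for x, y in rows)
    return correct / len(rows)


def tune_k(train, val, candidates=(1, 3, 5, 7)):
    """Return the candidate k with the best validation accuracy; ties favour smaller k."""
    return max(candidates, key=lambda k: (accuracy(train, val, k), -k))


if __name__ == "__main__":
    train, val = train_val_split(make_toy_data())
    best_k = tune_k(train, val)
    print(f"best k = {best_k}, validation accuracy = {accuracy(train, val, best_k):.2f}")
```

Because `k` is chosen by looking at validation accuracy, that score is optimistic; in a real project a separate test set, untouched during tuning, would be used for the final evaluation.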

Once the model is built, it must be evaluated on data it did not see during training. For classification models, common metrics include accuracy, precision, recall, and F1 score. For instance, a financial institution may develop a credit scoring model and evaluate it on historical loan data held out from training to check how accurately it predicts defaults.
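These metrics follow directly from counts of true positives, false positives, and false negatives. A minimal sketch for a binary classifier, assuming the positive class (here `1`) means "defaulted" in the credit-scoring example; the function name and sample labels are hypothetical:

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall and F1 for binary labels.

    `positive` is the label treated as the positive class (e.g. 1 = defaulted).
    Precision, recall and F1 fall back to 0.0 when their denominator is zero.
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # defaults caught
    fp = sum(t != positive and p == positive for t, p in pairs)  # false alarms
    fn = sum(t == positive and p != positive for t, p in pairs)  # defaults missed
    correct = sum(t == p for t, p in pairs)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


if __name__ == "__main__":
    # Hypothetical labels: 1 = borrower defaulted, 0 = repaid.
    actual = [1, 1, 0, 0]
    predicted = [1, 0, 0, 0]
    print(classification_metrics(actual, predicted))
```

Accuracy alone can mislead when defaults are rare: a model that predicts "repaid" for every applicant scores high accuracy yet has zero recall on defaults, which is why precision and recall are reported alongside it.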

Deployment: Bringing Models to Life

The final stage of the data science workflow is deployment, where the model is implemented in a production environment. This process involves:

- Integrating the model with existing systems

- Monitoring the model’s performance over time

- Updating the model as new data becomes available

For example, an e-commerce platform may deploy a recommendation system that suggests products to users based on their browsing history. Continuous monitoring ensures that the model remains effective and relevant as user behavior evolves.
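One simple form of ongoing monitoring is to track a rolling success rate and flag the model when it drops. The sketch below assumes a recommender where a "hit" means a suggested product was clicked; the class name, window size, and threshold are hypothetical choices rather than a standard.

```python
from collections import deque


class RollingHitRateMonitor:
    """Track the share of recent predictions that turned out well.

    `window` and `threshold` are hypothetical defaults; sensible values depend on
    traffic volume and on how much degradation the business can tolerate.
    """

    def __init__(self, window=1000, threshold=0.05):
        self.hits = deque(maxlen=window)  # oldest outcomes fall off automatically
        self.threshold = threshold

    def record(self, hit):
        """Log one outcome, e.g. True if a recommended product was clicked."""
        self.hits.append(bool(hit))

    def hit_rate(self):
        """Hit rate over the current window, or None before any outcome is logged."""
        if not self.hits:
            return None
        return sum(self.hits) / len(self.hits)

    def needs_attention(self):
        """True once the window is full and the hit rate has fallen below threshold."""
        return len(self.hits) == self.hits.maxlen and self.hit_rate() < self.threshold


if __name__ == "__main__":
    monitor = RollingHitRateMonitor(window=4, threshold=0.5)
    for clicked in [True, True, False, True, False, False, False]:
        monitor.record(clicked)
    print(monitor.hit_rate(), monitor.needs_attention())  # prints: 0.25 True
```

Waiting for a full window before alerting avoids reacting to a handful of early outcomes; when `needs_attention()` returns True, that is a cue to investigate and possibly retrain on recent data.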

Conclusion

The data science workflow is a comprehensive process that transforms raw data into actionable insights. From data collection to deployment, each stage plays a crucial role in ensuring the success of data-driven projects. By understanding and following this workflow, organizations can harness the power of data science to make informed decisions, improve operations, and drive innovation. As the field of data science continues to evolve, mastering this workflow will be essential for data professionals aiming to make a significant impact in their organizations.
